⚠️ Enterprise Product · This is a commercial platform for teams.

Marisma

Ephemeral sandbox for AI agents. Hardware-level KVM isolation. 40-60% ROI vs Kubernetes.

AI Hallucinates. Production Breaks.

Today's options: run skills on your own server (an agent can corrupt your database), use Kubernetes (a shared kernel means one exploit is game over), or trust the OpenAI cloud (your data sits on Microsoft infrastructure, with zero guarantees). Marisma offers a better way.

How Does Marisma Work?

Skill Request

The AI agent requests execution of a skill (scraper, compute, network)

Spawn Unikernel

Marisma creates an ephemeral unikernel (2-5MB RAM) in <300ms

Execute Skill

The skill runs in isolation (hardware-level KVM) with no host access

Return & Destroy

Result returned, unikernel destroyed (<50ms). Zero traces.

⚡ Total time: ~500ms (spawn + execution + cleanup)

Each skill runs in its own hardware-isolated unikernel (KVM), ensuring radical security without compromising performance. No containers, no shared kernels, no escape paths.
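The four steps above can be sketched as a spawn, execute, return, destroy lifecycle. This is a minimal in-process model, not Marisma's API: `Sandbox`, `ephemeral_sandbox`, and `execute_skill` are hypothetical names, and a plain Python object stands in for the KVM-backed unikernel, so it provides no real isolation. It only shows the shape of the flow, including cleanup when a skill fails.

```python
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Callable, Iterator
import uuid


@dataclass
class Sandbox:
    """Hypothetical stand-in for one ephemeral unikernel instance."""
    sandbox_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    alive: bool = True

    def run(self, skill: Callable[[dict], dict], payload: dict) -> dict:
        if not self.alive:
            raise RuntimeError("sandbox already destroyed")
        # The skill receives a shallow copy of the payload, not the caller's dict.
        return skill(dict(payload))


@contextmanager
def ephemeral_sandbox() -> Iterator[Sandbox]:
    sandbox = Sandbox()        # step 2: spawn
    try:
        yield sandbox          # step 3: execute
    finally:
        sandbox.alive = False  # step 4: destroy, even if the skill raised


def execute_skill(skill: Callable[[dict], dict], payload: dict):
    """Step 1 is the call itself: an agent asks for a skill to be run."""
    with ephemeral_sandbox() as sandbox:
        result = sandbox.run(skill, payload)
    return result, sandbox


def word_count(payload: dict) -> dict:
    """Toy skill used only for this illustration."""
    return {"words": len(payload["text"].split())}


result, used = execute_skill(word_count, {"text": "ephemeral sandbox for agents"})
print(result, used.alive)  # {'words': 4} False
```

The `finally` clause is the point of the sketch: the sandbox is torn down on every path, so no instance outlives the request that created it.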

Radical Advantages

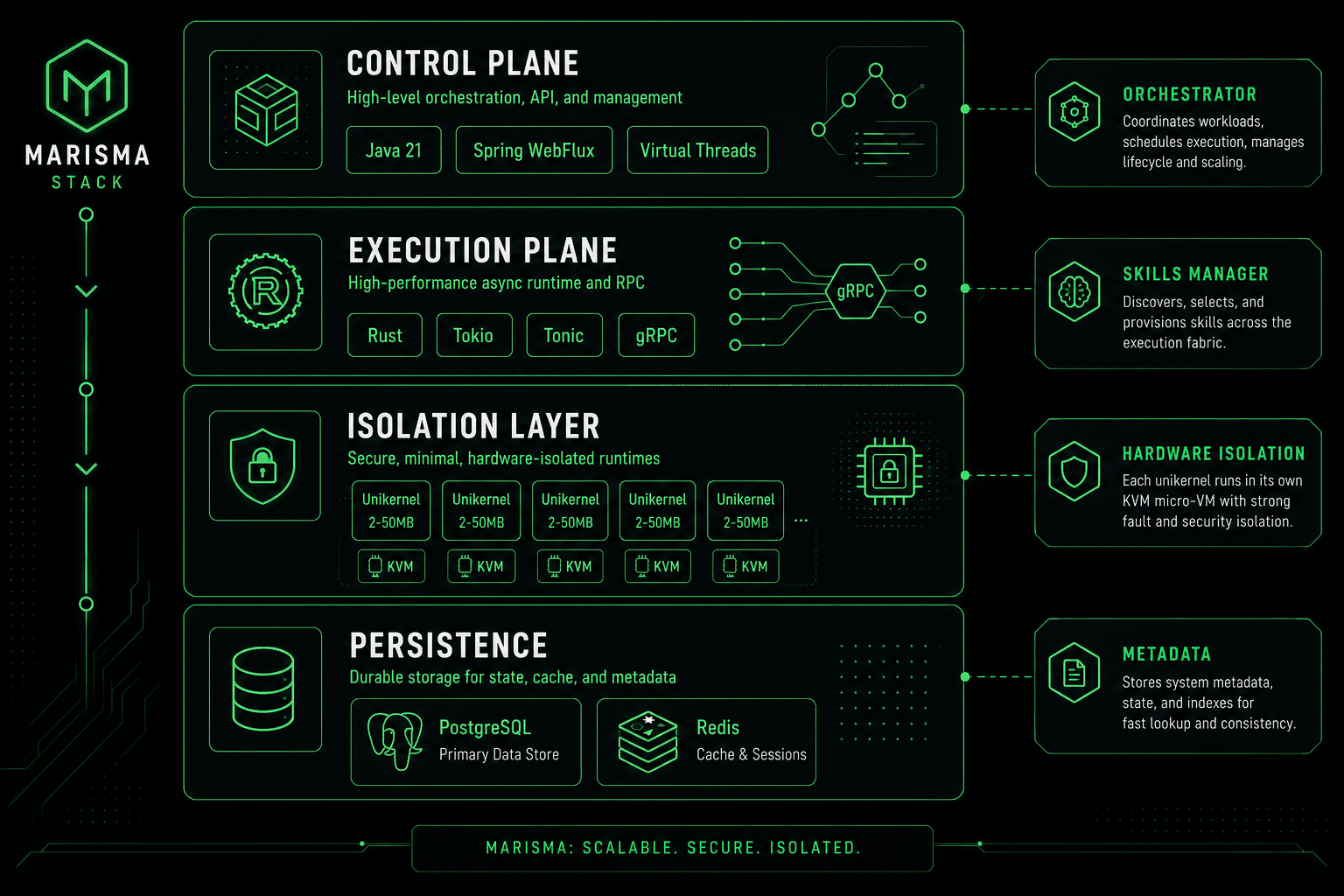

Marisma ephemeral sandbox architecture

🔒 Hardware Isolation

Docker/K8s: Linux namespaces (software). Shared kernel. 1 exploit = system compromised.

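Marisma: KVM (hardware-assisted virtualization). No kernel is shared with the host or with other skills, so an escape has to break the hypervisor boundary, not just a kernel.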

⚡ Extreme Density

Docker/K8s: ~100 MB per container

Kubernetes Pod: 500 MB - 4 GB

Marisma Unikernel: 2-50 MB

Same hardware, 10x capacity. 100+ skills in 16GB RAM.
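The density figures above can be checked with simple arithmetic. The sketch below divides 16 GB by each quoted footprint; it assumes all RAM is available to workloads (no host OS or hypervisor overhead), which overstates real capacity for every option equally.

```python
RAM_MB = 16 * 1024  # 16 GB host; overhead ignored (assumption)

# Per-instance footprints as quoted in the comparison above.
footprints_mb = {
    "Docker/K8s container (~100 MB)": 100,
    "Kubernetes pod, low end (500 MB)": 500,
    "Kubernetes pod, high end (4 GB)": 4 * 1024,
    "Marisma unikernel, low end (2 MB)": 2,
    "Marisma unikernel, high end (50 MB)": 50,
}

# Whole instances that fit in RAM at each footprint.
capacity = {name: RAM_MB // mb for name, mb in footprints_mb.items()}

for name, count in capacity.items():
    print(f"{name}: {count} instances")
```

Under these assumptions, even the 50 MB upper bound fits 327 unikernels in 16 GB, which is consistent with the "100+ skills" figure. A 10x gain over a ~100 MB container corresponds to an average unikernel of about 10 MB.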

📜 Immutable Traceability

Marisma captures exact context of each AI decision:

- Timestamp & skill executed

- Input/output hashes

- Cryptographic signature

Auditors can verify: "Why did the AI approve that refund?" Full context, cryptographically proven.
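A record with the three fields listed above might look like the following sketch. Everything here is illustrative rather than Marisma's actual format: the field names, `make_record`, and `verify_record` are hypothetical, and an HMAC with a shared demo key stands in for the signature. A production audit trail would more likely use an asymmetric signature so that auditors can verify records without holding the signing key.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone

SIGNING_KEY = b"demo-key-not-for-production"  # hypothetical key for this sketch


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def canonical(record: dict) -> bytes:
    # Sorted keys and fixed separators give a stable byte encoding to sign.
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode()


def make_record(skill: str, skill_input: bytes, skill_output: bytes, when: datetime) -> dict:
    record = {
        "timestamp": when.isoformat(),
        "skill": skill,
        "input_sha256": sha256_hex(skill_input),
        "output_sha256": sha256_hex(skill_output),
    }
    # The signature covers every field above; it is added last.
    record["signature"] = hmac.new(SIGNING_KEY, canonical(record), hashlib.sha256).hexdigest()
    return record


def verify_record(record: dict) -> bool:
    unsigned = {k: v for k, v in record.items() if k != "signature"}
    expected = hmac.new(SIGNING_KEY, canonical(unsigned), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, record.get("signature", ""))


record = make_record(
    "approve_refund",
    b'{"order": 1}',
    b'{"approved": true}',
    datetime(2026, 4, 25, 14, 30, tzinfo=timezone.utc),
)
tampered = dict(record, skill="deny_refund")
print(verify_record(record), verify_record(tampered))  # True False
```

Storing hashes instead of raw inputs and outputs keeps sensitive data out of the log while still letting an auditor confirm that a given input produced a given output.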

Technology Stack

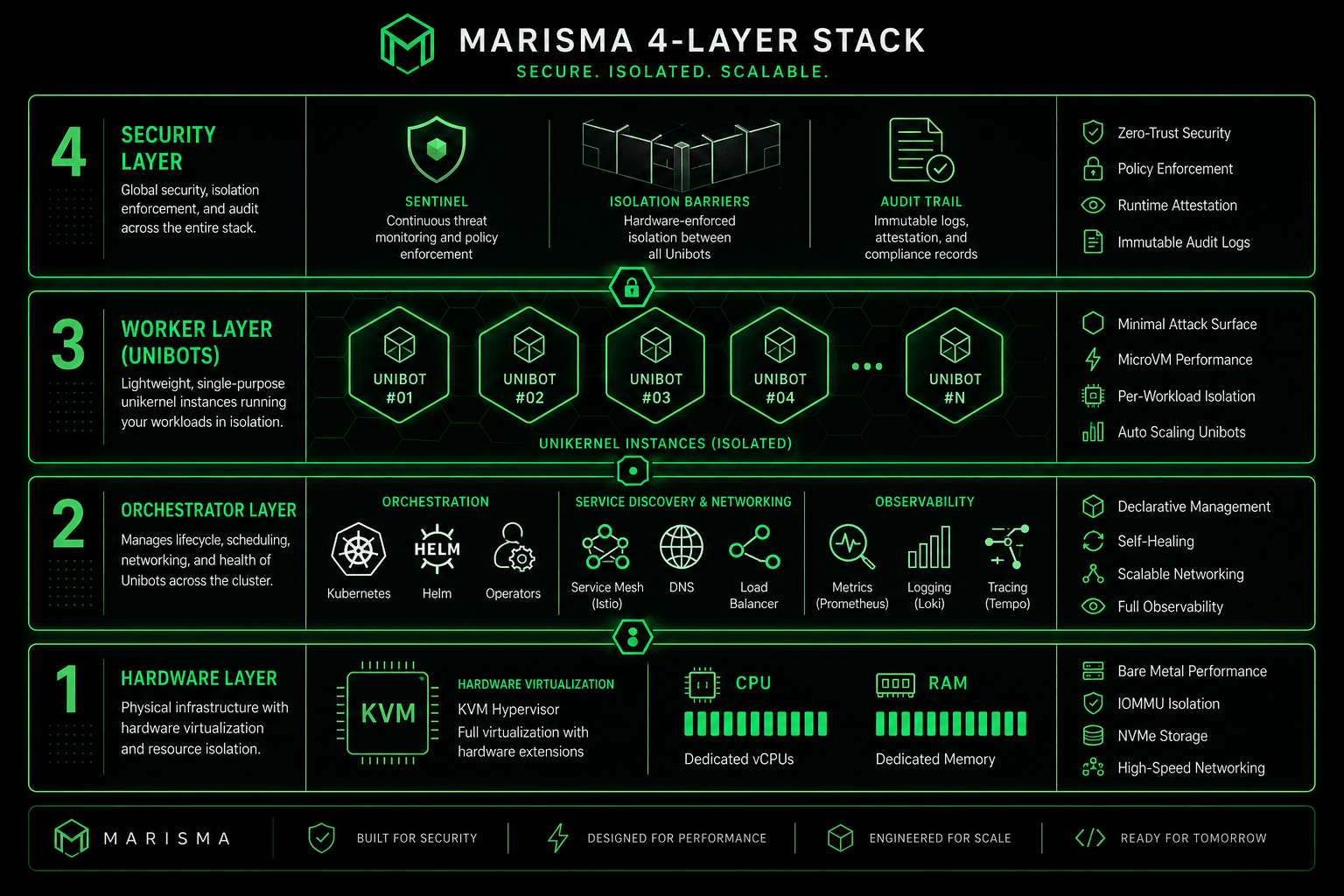

Enterprise-grade 4-layer architecture

Marisma leverages modern systems programming languages and hardware virtualization to deliver uncompromising security and performance. The architecture consists of multiple isolation layers, each designed to prevent privilege escalation and ensure cryptographic auditability.

⚡ High-Performance Core

Built with systems programming languages optimized for concurrent workloads and minimal memory footprint.

🔒 Multi-Layer Isolation

Hardware-level virtualization combined with unikernel technology for mathematical security guarantees.

📊 Enterprise Persistence

Production-grade database and caching infrastructure optimized for high-throughput audit trails.

See Marisma in Action

Marisma is an enterprise platform designed for production deployments. Schedule a personalized demo to see how hardware-level isolation and extreme density can transform your AI infrastructure.

🎯 Custom Demo

45-minute technical walkthrough tailored to your use case. See Marisma orchestrating real workloads with cryptographic audit trails.

📊 Architecture Review

Deep dive into the 4-layer isolation model, unikernel execution, and how Marisma integrates with your existing infrastructure.

💼 ROI Analysis

Compare costs vs Kubernetes/Docker. Analyze density improvements, security guarantees, and compliance benefits for your scenario.

Use Cases

🏦 Financial Agent

Requirement: Analyze a private portfolio without sending it to OpenAI.

With Marisma:

- ✓ Agent runs locally in Marisma

- ✓ Data never leaves infrastructure

- ✓ Decision auditable + cryptographically valid

ROI: 50% savings + automatic compliance

🛒 Skills Marketplace

Requirement: Allow third parties to run skills without breaking the platform.

With Marisma:

- ✓ Each skill = isolated island (hardware guarantee)

- ✓ If skill fails, others continue (no contagion)

- ✓ Cryptographic validation = impossible fraud

ROI: Monetize marketplace without risks

📊 Supply Chain Audit

Requirement: AI decisions must be auditable by regulators.

With Marisma:

- ✓ ExecutionLifecycle = exact context snapshot

- ✓ Cryptographic signature = legal proof

- ✓ Replay: "Show me the decision from 2026-04-25 14:30"

ROI: Compliance + automatic legal defense
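The replay query in this use case amounts to a lookup by timestamp. The sketch below assumes a hypothetical audit log kept sorted by time and returns the latest decision at or before the requested moment. The entries and the `decision_at` helper are invented for illustration and say nothing about how Marisma stores or indexes its records.

```python
from bisect import bisect_right
from datetime import datetime

# Hypothetical audit log, kept sorted by timestamp.
log = [
    (datetime(2026, 4, 25, 14, 10), "check_inventory"),
    (datetime(2026, 4, 25, 14, 30), "approve_supplier"),
    (datetime(2026, 4, 25, 15, 5), "reroute_shipment"),
]


def decision_at(entries, when):
    """Return the latest entry at or before `when`, or None if there is none."""
    times = [t for t, _ in entries]
    index = bisect_right(times, when)  # number of entries with time <= when
    return entries[index - 1] if index else None


found = decision_at(log, datetime(2026, 4, 25, 14, 30))
print(found[1])  # approve_supplier
```

A binary search keeps the lookup logarithmic in the log size, which matters once the trail holds many executions per day.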

Plans & Pricing

Marisma is available as part of Enterprise Professional and Enterprise plans. Check our complete pricing.

Stop Playing Russian Roulette with AI

Join teams building autonomous AI systems with mathematical security guarantees, not wishful thinking.