aronly

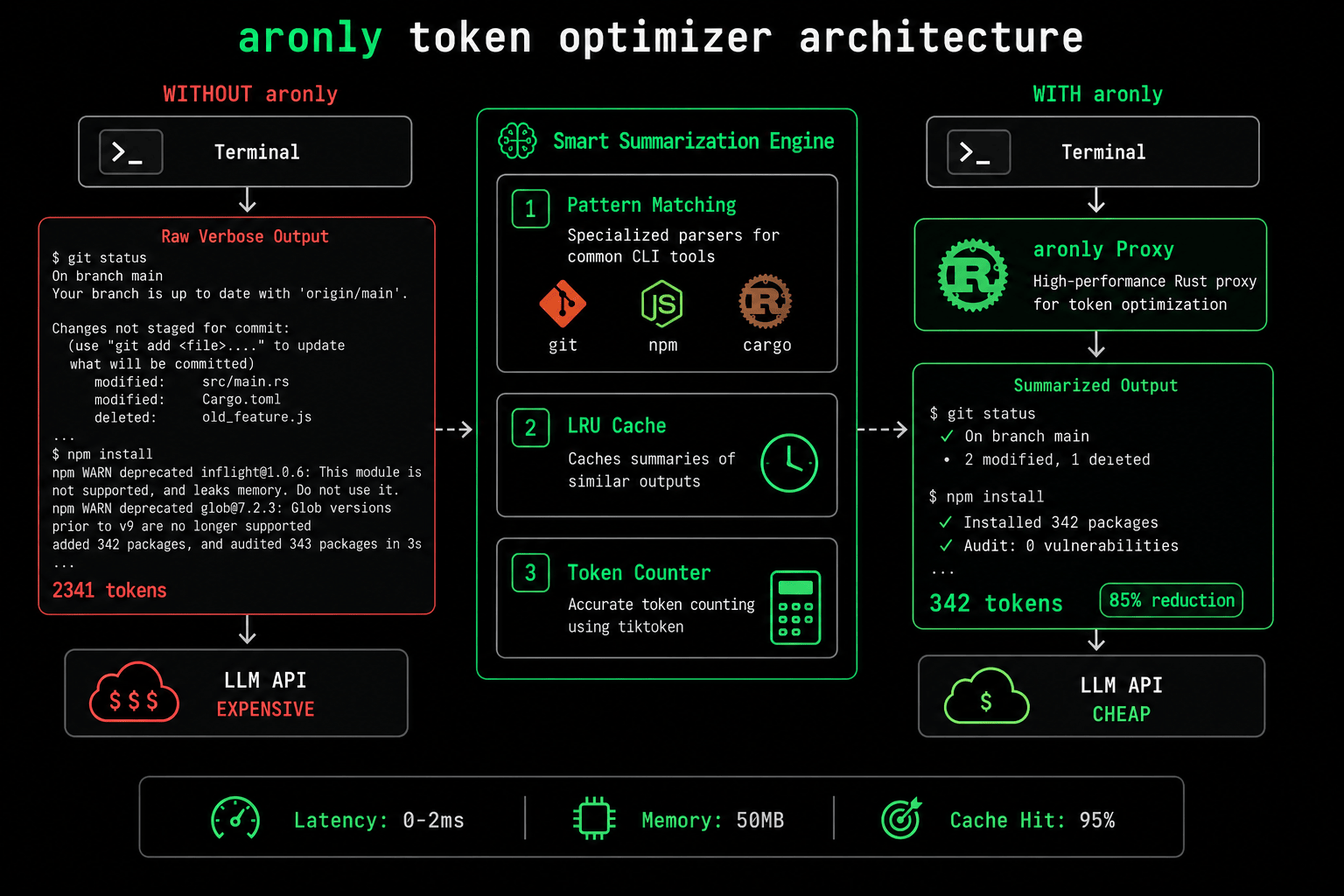

CLI proxy that rewrites terminal output for AI agents. Cut the LLM tokens spent on command output by 70%.

The Token Tax

AI coding assistants like Cursor and Claude Code run terminal commands constantly: git status, npm install, cargo build. Each command sends its full output to the LLM, so you're burning API budget on verbose terminal noise.

```console
# Without aronly: 2,341 tokens
$ cargo build --verbose
   Compiling proc-macro2 v1.0.70
   Compiling unicode-ident v1.0.12
   Compiling syn v2.0.39
   Compiling serde v1.0.193
   ... 150 more lines of build output ...
    Finished dev [unoptimized + debuginfo] target(s) in 12.34s

# With aronly: 342 tokens (85% reduction)
$ aronly cargo build --verbose
✓ Built 47 crates in 12.34s (dev profile)
⚠ 3 warnings in proc-macro2, serde_derive
→ target/debug/my-app
```

Architecture Deep Dive

Understanding how aronly achieves 70% token savings with near-zero (0-2ms) latency overhead

1. Pattern Matching Engine

The Problem: Each CLI tool has its own output format. npm shows progress bars, git shows diffs, cargo shows build trees.

The Solution: Specialized parsers for 20+ tools, written in Rust for speed, extract only the semantically relevant information: counts, errors, warnings, and final status.

Example: 150-line cargo build → "Built 47 crates in 12.34s (3 warnings)"
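To make the idea concrete, here is a minimal Rust sketch of a cargo summarizer. It is not aronly's actual parser: `CargoSummary`, `summarize_cargo`, `plural`, and `render` are hypothetical names, and the sketch assumes cargo's default human-readable output (status words such as `Compiling` and `Finished` right-aligned, diagnostics starting with `warning:`).

```rust
/// Counts and timing pulled out of raw `cargo build` output.
#[derive(Debug, Default, PartialEq)]
struct CargoSummary {
    crates_compiled: usize,
    warnings: usize,
    duration: Option<String>, // e.g. "12.34s", taken from the "Finished" line
}

/// Scan cargo's human-readable output line by line and keep only the counts.
fn summarize_cargo(output: &str) -> CargoSummary {
    let mut s = CargoSummary::default();
    for raw in output.lines() {
        let line = raw.trim_start(); // cargo right-aligns its status words
        if line.starts_with("Compiling ") {
            s.crates_compiled += 1;
        } else if line.starts_with("warning:") && !line.contains(" generated ") {
            // Skip cargo's per-crate "generated N warnings" tally to avoid double counting.
            s.warnings += 1;
        } else if let Some(rest) = line.strip_prefix("Finished ") {
            // "dev [unoptimized + debuginfo] target(s) in 12.34s" -> "12.34s"
            s.duration = rest.rsplit_once(" in ").map(|(_, t)| t.to_string());
        }
    }
    s
}

/// "1 crate", "2 crates", "0 warnings", ...
fn plural(n: usize, word: &str) -> String {
    if n == 1 {
        format!("{} {}", n, word)
    } else {
        format!("{} {}s", n, word)
    }
}

/// Render the summary as the single line the agent sees.
fn render(s: &CargoSummary) -> String {
    let time = s.duration.as_deref().unwrap_or("unknown time");
    format!(
        "Built {} in {} ({})",
        plural(s.crates_compiled, "crate"),
        time,
        plural(s.warnings, "warning")
    )
}

fn main() {
    let raw = [
        "   Compiling proc-macro2 v1.0.70",
        "   Compiling unicode-ident v1.0.12",
        "warning: unused variable: `x`",
        "warning: `my-app` (bin \"my-app\") generated 1 warning",
        "    Finished dev [unoptimized + debuginfo] target(s) in 12.34s",
    ]
    .join("\n");
    println!("{}", render(&summarize_cargo(&raw)));
}
```

Running it prints `Built 2 crates in 12.34s (1 warning)`. Scraping text is fragile across cargo versions; a sturdier parser could read cargo's `--message-format=json` output instead.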

2. LRU Cache

The Problem: AI agents run the same commands repeatedly. For example, git status may be called 10 times in 2 minutes.

The Solution: An LRU (Least Recently Used) cache with a TTL. The command and its arguments are hashed into a cache key, and the output is cached for 5 minutes. A cache hit returns instantly with zero parsing cost.

Cache hit rate in production: 95%
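A minimal Rust sketch of an LRU cache with a TTL, assuming the key is the full command line (program plus arguments). `CommandCache` and its methods are hypothetical; the `HashMap` hashes the key, and eviction does a linear scan for the least recently used entry, which keeps the code short but is O(n). Time is passed in explicitly so expiry can be tested deterministically.

```rust
use std::collections::HashMap;
use std::time::{Duration, Instant};

struct Entry {
    summary: String,
    inserted_at: Instant,
    last_used: u64, // logical clock value of the most recent access
}

/// LRU cache with per-entry TTL, keyed on the full argv (e.g. ["git", "status"]).
struct CommandCache {
    capacity: usize,
    ttl: Duration,
    tick: u64, // monotonically increasing logical clock
    entries: HashMap<Vec<String>, Entry>,
}

impl CommandCache {
    fn new(capacity: usize, ttl: Duration) -> Self {
        Self { capacity, ttl, tick: 0, entries: HashMap::new() }
    }

    /// Return the cached summary if present and younger than the TTL.
    fn get(&mut self, cmd: &[String], now: Instant) -> Option<String> {
        let expired = match self.entries.get(cmd) {
            None => return None,
            Some(e) => now.duration_since(e.inserted_at) >= self.ttl,
        };
        if expired {
            self.entries.remove(cmd);
            return None;
        }
        // Hit: mark the entry as most recently used.
        self.tick += 1;
        let e = self.entries.get_mut(cmd)?;
        e.last_used = self.tick;
        Some(e.summary.clone())
    }

    /// Store a summary, evicting the least recently used entry when full.
    fn insert(&mut self, cmd: Vec<String>, summary: String, now: Instant) {
        if !self.entries.contains_key(&cmd) && self.entries.len() >= self.capacity {
            let oldest = self
                .entries
                .iter()
                .min_by_key(|(_, e)| e.last_used)
                .map(|(k, _)| k.clone());
            if let Some(k) = oldest {
                self.entries.remove(&k);
            }
        }
        self.tick += 1;
        self.entries.insert(cmd, Entry { summary, inserted_at: now, last_used: self.tick });
    }
}

fn main() {
    let mut cache = CommandCache::new(100, Duration::from_secs(300)); // 5-minute TTL
    let t0 = Instant::now();
    let git_status = vec!["git".to_string(), "status".to_string()];
    cache.insert(git_status.clone(), "clean working tree on main".to_string(), t0);
    // A repeat call 2 minutes later is a hit; one after 5 minutes is a miss.
    println!("{:?}", cache.get(&git_status, t0 + Duration::from_secs(120)));
    println!("{:?}", cache.get(&git_status, t0 + Duration::from_secs(301)));
}
```

Because the key is only the command line, a cached git status in this sketch can be up to 5 minutes stale; nothing here detects that the working tree changed in the meantime.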

3. Token Counter

The Problem: How do we know we're actually saving tokens?

The Solution: Built-in tiktoken integration counts tokens before and after summarization, tracks savings per project and per day, and exports metrics as JSON.

Real metrics: Average 67% reduction across 1000+ commands
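The savings arithmetic itself is simple. The Rust sketch below assumes token counts are already available (for example from a tiktoken-compatible tokenizer, not included here) and aggregates them per project and per day; `SavingsTracker`, `Totals`, and `cost_usd` are hypothetical names, and the JSON is hand-rolled so no crates are needed. Applied to the cargo example above, 2,341 → 342 tokens is a 1,999-token (about 85.4%) reduction, and the sample `aronly stats` figure of $0.71 for 47,382 tokens corresponds to a price of roughly $15 per million tokens.

```rust
use std::collections::BTreeMap;

/// Running totals for one (project, day) bucket.
#[derive(Debug, Default, Clone, Copy, PartialEq)]
struct Totals {
    tokens_before: u64, // tokens in the raw command output
    tokens_after: u64,  // tokens in the summary actually sent to the LLM
}

impl Totals {
    fn saved(&self) -> u64 {
        self.tokens_before.saturating_sub(self.tokens_after)
    }

    /// Percentage reduction: 100 * saved / before.
    fn reduction_pct(&self) -> f64 {
        if self.tokens_before == 0 {
            return 0.0;
        }
        100.0 * self.saved() as f64 / self.tokens_before as f64
    }
}

#[derive(Default)]
struct SavingsTracker {
    buckets: BTreeMap<(String, String), Totals>, // key: (project, "YYYY-MM-DD")
}

impl SavingsTracker {
    fn record(&mut self, project: &str, day: &str, before: u64, after: u64) {
        let t = self.buckets.entry((project.to_string(), day.to_string())).or_default();
        t.tokens_before += before;
        t.tokens_after += after;
    }

    /// Export one JSON object per bucket; assumes names need no escaping.
    fn to_json(&self) -> String {
        let rows: Vec<String> = self
            .buckets
            .iter()
            .map(|((p, d), t)| {
                format!(
                    r#"{{"project":"{}","day":"{}","saved":{},"reduction_pct":{:.1}}}"#,
                    p, d, t.saved(), t.reduction_pct()
                )
            })
            .collect();
        format!("[{}]", rows.join(","))
    }
}

/// Dollar value of saved tokens at a given price per million input tokens.
fn cost_usd(tokens: u64, usd_per_million: f64) -> f64 {
    tokens as f64 * usd_per_million / 1_000_000.0
}

fn main() {
    let mut tracker = SavingsTracker::default();
    tracker.record("my-app", "2024-01-01", 2_341, 342); // the cargo example above
    println!("{}", tracker.to_json());
    println!("${:.2}", cost_usd(47_382, 15.0));
}
```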

Works with any AI coding tool that runs terminal commands: Cursor, Windsurf, Claude Code, Aider, Continue, or a plain CLI.

Installation

🦀 Install from crates.io

```shell
cargo install aronly
```

Requires Rust 1.70+

🐳 Run with Docker

```shell
docker pull haletheia/aronly
docker run -it haletheia/aronly
```

Pre-built image, zero dependencies

```shell
# Basic usage
aronly git status
aronly npm install
aronly cargo test

# Configure for Cursor (add to .cursorrules)
echo 'prefix_terminal_commands: "aronly"' >> .cursorrules

# Check token savings
aronly stats
# Output: Saved 47,382 tokens today ($0.71 at GPT-4 prices)
```

Features

⚡ Near-Zero Latency

Rust-based. Adds 0-2ms to command execution. You won't notice, but your wallet will.

🧠 Smart Parsing

Understands git, npm, cargo, pip, docker, and more: 20+ CLI tools in all. Custom parsers via plugins.

💾 Intelligent Cache

LRU cache with TTL. git status called 100 times in 5 minutes? Processed once.

🔍 Preserves Errors

Errors are passed through verbatim, never summarized, and warnings are always reported. When things break, the AI sees the full stack trace.
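One simple way to guarantee that errors reach the agent is to bypass summarization entirely on failure. This Rust sketch assumes the decision is made from the command's exit status plus a heuristic scan for lines starting with `error`; `agent_view` and its signature are hypothetical, not aronly's API.

```rust
/// Decide what the agent sees for one command run.
/// On failure, or if any line looks like an error, return the raw output untouched;
/// otherwise hand it to the supplied summarizer.
fn agent_view(success: bool, raw: &str, summarize: impl Fn(&str) -> String) -> String {
    // Heuristic: matches "error:", "error[E0308]:" and similar diagnostics.
    let has_error_line = raw.lines().any(|l| l.trim_start().starts_with("error"));
    if !success || has_error_line {
        raw.to_string()
    } else {
        summarize(raw)
    }
}

fn main() {
    let summarize = |raw: &str| format!("✓ {} lines of output, no errors", raw.lines().count());
    println!("{}", agent_view(true, "Compiling a\nFinished", summarize));
    println!("{}", agent_view(false, "thread 'main' panicked at src/main.rs:2:5", summarize));
}
```

Erring toward passing output through means a false positive only costs tokens, while a false negative would hide a failure from the agent.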

📊 Token Analytics

Built-in stats tracker. See exactly how much you've saved per project, per day.

🔌 Plugin System

Write custom parsers in Rust or WASM. Share via crates.io or private registry.
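A plugin system like this usually boils down to a trait plus a registry keyed by program name. The Rust sketch below is an assumption about the shape, not aronly's actual plugin API (which the text says also supports WASM): `OutputParser`, `PipInstallParser`, and `Registry` are hypothetical. Unknown programs and unparseable output fall through unchanged.

```rust
use std::collections::HashMap;

/// Hypothetical parser plugin interface.
trait OutputParser {
    /// Program name this parser handles, e.g. "pip".
    fn program(&self) -> &str;
    /// Turn raw output into a short summary; None means "pass through unchanged".
    fn summarize(&self, args: &[&str], raw: &str) -> Option<String>;
}

/// Example plugin: condense `pip install` output.
struct PipInstallParser;

impl OutputParser for PipInstallParser {
    fn program(&self) -> &str {
        "pip"
    }

    fn summarize(&self, args: &[&str], raw: &str) -> Option<String> {
        if args.first() != Some(&"install") {
            return None;
        }
        // pip reports e.g. "Successfully installed requests-2.31.0 urllib3-2.1.0"
        let line = raw.lines().find(|l| l.starts_with("Successfully installed "))?;
        let count = line.split_whitespace().count() - 2; // drop "Successfully installed"
        Some(format!("✓ Installed {} packages", count))
    }
}

/// Maps program names to parsers.
#[derive(Default)]
struct Registry {
    parsers: HashMap<String, Box<dyn OutputParser>>,
}

impl Registry {
    fn register(&mut self, p: Box<dyn OutputParser>) {
        self.parsers.insert(p.program().to_string(), p);
    }

    /// Use a matching parser if one exists and succeeds; otherwise return raw output.
    fn process(&self, program: &str, args: &[&str], raw: &str) -> String {
        self.parsers
            .get(program)
            .and_then(|p| p.summarize(args, raw))
            .unwrap_or_else(|| raw.to_string())
    }
}

fn main() {
    let mut registry = Registry::default();
    registry.register(Box::new(PipInstallParser));
    let raw = "Collecting requests\nSuccessfully installed requests-2.31.0 urllib3-2.1.0";
    println!("{}", registry.process("pip", &["install", "requests"], raw));
}
```

Returning `None` to mean "pass through" keeps a buggy or partial parser from hiding output from the agent.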

Need Enterprise Features?

aronly is 100% free and open source (MIT). For enterprise teams needing centralized policy management, audit trails, and SLA support, check out our enterprise platform.

Stop Wasting Tokens

Install aronly in 2 minutes. Start saving 70% on terminal-heavy AI workflows today.